Infostealers ULP Data Is Burning Out SOC Teams and Killing Automation

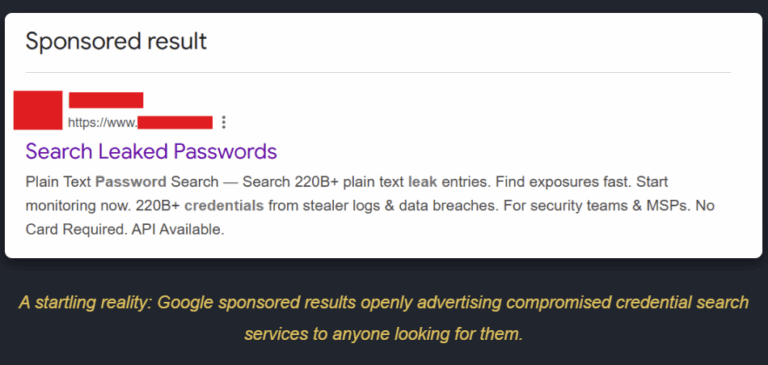

The cybersecurity industry has developed a dangerous dual obsession: automating on unverified data and treating sheer data volume as the ultimate benchmark for success. Vendors routinely boast about monitoring “tens of billions” of leaked records, equating raw database size with superior protection. To handle this influx, we have built massive, interconnected systems designed to ingest data and isolate threats at machine speed. The theory is sound: detect a compromised credential, fire a webhook to Okta or Active Directory, and force a password reset before the adversary can act.

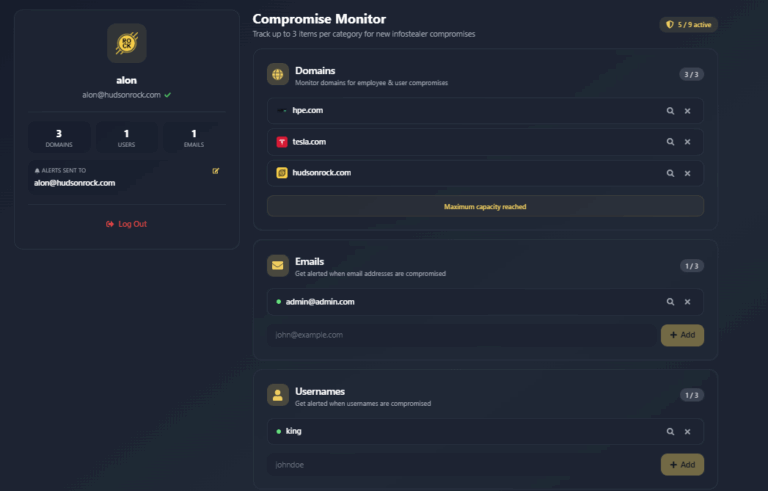

But this theory ignores a massive architectural blind spot. When volume is prioritized over validity, the security ecosystem becomes deeply fragile. At Infostealers.com, the dedicated research division of Hudson Rock, we are issuing a direct call to action: Vendors must stop treating raw URL:Login:Password (ULP) data as high-confidence intelligence and instead require verified provenance from full infostealer logs before triggering automated responses.

Attackers have realized that if they target our automated defense mechanisms with spoofed or recycled data, they can turn our own playbooks into weapons. The “Reset-as-a-Service” attack has moved from a theoretical prank to a global operational vulnerability.

Picture this: by flooding public channels with recycled credentials mixed with synthetic or outdated ULP strings, attackers create repeated spikes in “compromised credential” alerts. Large aggregators ingest the data quickly and syndicate it downstream. The result isn’t one dramatic global shutdown: it’s waves of false-positive alerts hitting thousands of MSSPs and SOAR playbooks. Helpdesks get flooded with reset tickets, SOC teams lose trust in automation, and many organizations are forced to temporarily throttle or disable auto-remediation playbooks. Real attackers love the breathing room this creates.

Phase 1: The Injection

Large volumes of recycled and synthetic ULP lines are ingested by volume-focused intelligence vendors.

Phase 2: The Syndication

Unverified data flows via API to downstream MSSPs and Security Aggregators, bypassing validation.

Phase 3: Helpdesk Overload & Automation Throttling

Waves of false positives trigger ticket storms, forcing organizations to disable auto-remediation playbooks.

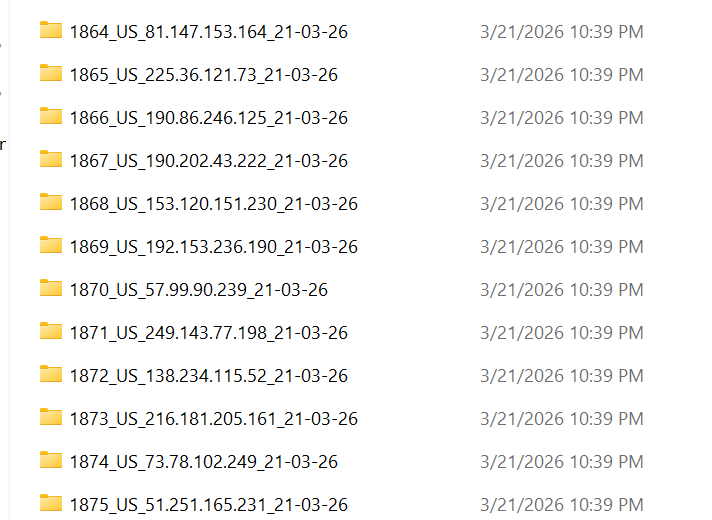

1. The Anatomy of ULP and the Fragmentation of Supply

To understand the vulnerability, we must look at what “threat intelligence” actually means for the majority of the market. The ecosystem functions like a pyramid, and the foundational data format is critically flawed.

Most primary gatherers operate on ULP datasets. ULP stands for URL, Login, and Password. These are plain-text strings, entirely devoid of cryptographic context, extracted and traded endlessly on dark web forums and Telegram channels.
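To make the format concrete, here is a minimal Python sketch of what a consumer actually receives when it parses a ULP line. The `UlpRecord` type, the `parse_ulp` helper, and the sample line are hypothetical and for illustration only; real dumps use inconsistent delimiters, and this sketch assumes the simple `url:login:password` layout.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class UlpRecord:
    """Everything a ULP line carries: three strings and nothing else."""
    url: str
    login: str
    password: str


def parse_ulp(line: str) -> Optional[UlpRecord]:
    # Split from the right because URLs contain colons ("https://").
    # Assumption: the password itself contains no colon; if it does,
    # this naive split misparses the line.
    parts = line.strip().rsplit(":", 2)
    if len(parts) != 3 or not all(parts):
        return None
    url, login, password = parts
    return UlpRecord(url, login, password)


# Made-up sample line, not taken from any real dump.
record = parse_ulp("https://login.example.com/signin:alice@example.com:Summer2020!")
print(record)
```

Note what the parsed record lacks: there is no infection date, no device identifier, and no source attribution. Nothing in the string distinguishes a fresh infection from a line recycled from an old dump or typed by hand.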

The core issue is that this unverified text degrades as it moves down the pyramid. A poisoned text file injected into a primary ULP source gets scooped up by a large Layer 2 aggregator, which syndicates it onward and inadvertently lets it hijack the automated response loops of every downstream MSSP customer in Layer 3.

2. The “Validation Gap” in Downstream Automation

The industry falsely assumes that automation systems validate data before acting on it. While a large intelligence vendor might have deep agent integration to cryptographically validate a leaked password against an Active Directory hash, the downstream ecosystem does not.

An MSSP managing 500 different mid-market clients relies on generic SOAR (Security Orchestration, Automation, and Response) playbooks. At this scale, “Validation” is just Identity Matching (Email + Domain), not Cryptographic Matching. Because there is no hash verification, a spoofed ULP string easily triggers waves of false positives and eventually forces teams to suspend automation.
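The difference between the two kinds of matching can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a description of any vendor's implementation: the function names are hypothetical, and PBKDF2-SHA256 stands in for whatever password hash the identity provider actually stores (Active Directory uses a different scheme).

```python
import hashlib
import hmac


def identity_match(alert_email: str, client_domains: set[str]) -> bool:
    """Identity Matching: does the email belong to a monitored domain?"""
    _, _, domain = alert_email.lower().rpartition("@")
    return domain in client_domains


def credential_match(leaked_password: str, salt: bytes, stored_hash: bytes) -> bool:
    """Cryptographic Matching: does the leaked password hash to the stored value?

    Assumption: PBKDF2-SHA256 is a stand-in for the real directory hash.
    """
    derived = hashlib.pbkdf2_hmac("sha256", leaked_password.encode(), salt, 100_000)
    return hmac.compare_digest(derived, stored_hash)


# Hypothetical directory entry for alice@example.com.
salt = b"demo-salt"
stored = hashlib.pbkdf2_hmac("sha256", b"CorrectHorse!", salt, 100_000)

# A spoofed ULP line: real email address, invented password.
spoofed_email, spoofed_password = "alice@example.com", "NotHerPassword1"

print(identity_match(spoofed_email, {"example.com"}))    # identity check passes
print(credential_match(spoofed_password, salt, stored))  # hash check fails
```

A playbook that stops at `identity_match` would reset Alice's password on the strength of a fabricated line. One that also requires `credential_match` would not.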

3. Real-World Precedents: The Cost of ULP Reliance

When billions of unverified, context-less rows hit the ecosystem, the resulting noise alone is enough to paralyze security operations.

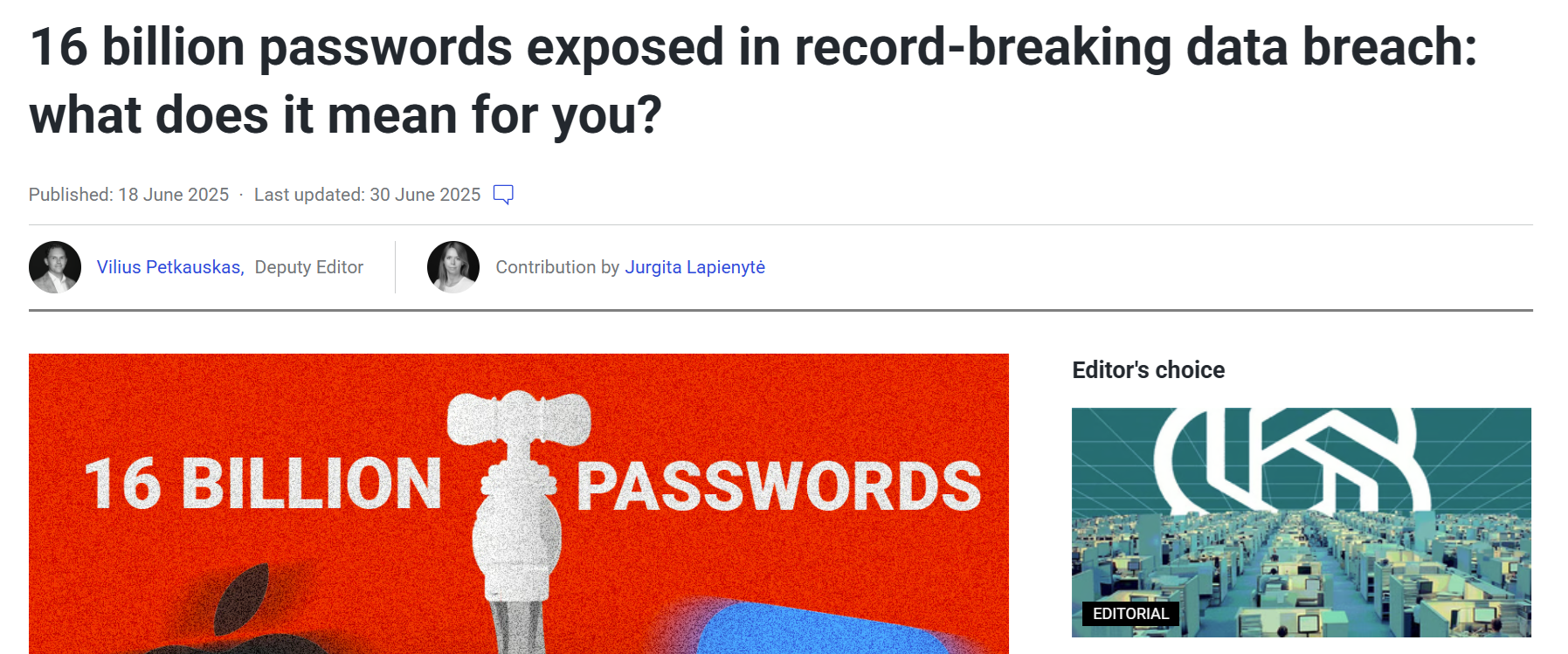

The 16-Billion-Record Chaos

In mid-2025, the cybersecurity community panicked over a massive 16-billion-record credential dump. This was not a targeted poisoning attack, but a sprawling compilation of 30 datasets consisting largely of recycled ULP data. Analytical pipelines choked, and vendors scrambled to determine if major platforms like Google had been breached directly. They had not; the login URLs simply appeared alongside passwords in the raw ULP strings.

The 183-Million “Gmail Breach” Mirage

This exact phenomenon repeated itself in late 2025 with the infamous “Gmail data breach” scare. Mainstream outlets reported that 183 million Gmail accounts were compromised. Google was forced to clarify that the accounts were actually from a compilation of recycled ULP data stolen over years. A staggering 91 percent of these credentials were pre-existing. If downstream MSSPs had tied automated SOAR playbooks to these alerts, they would have triggered helpdesk ticket storms based entirely on stale zombie data.

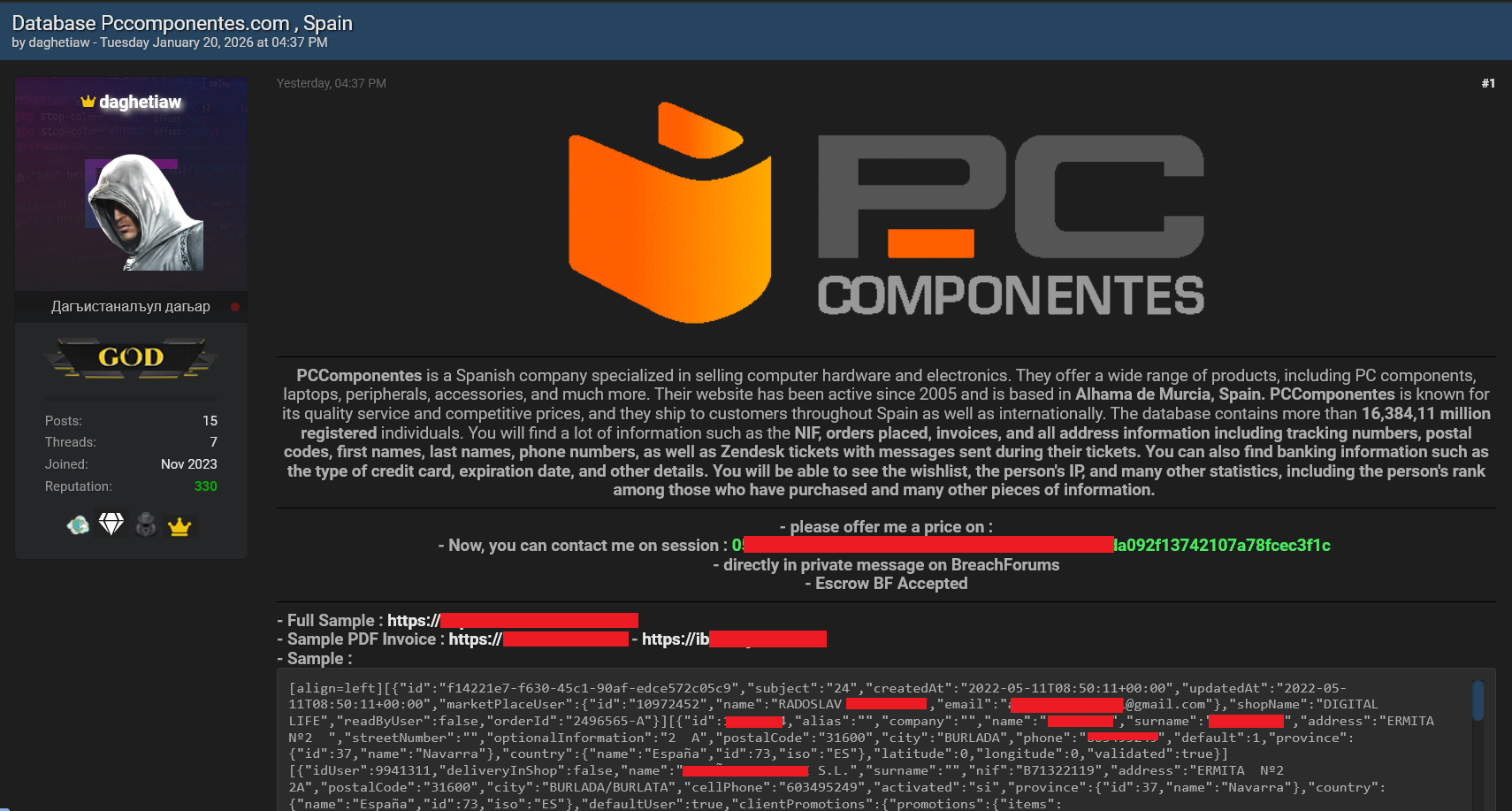

The Weaponization of Old Logs: The PcComponentes “Fake Breach”

This “Validation Gap” is actively being weaponized. In early 2026, a threat actor claimed to have breached the internal infrastructure of PcComponentes, offering a sample of highly sensitive customer data. However, using deep-provenance analysis via CavalierGPT, researchers discovered that every single email matched existing, unremediated infostealer logs dating back to 2020. The attacker simply aggregated old ULP data, used credential stuffing to log in to customer accounts directly, and scraped PII to create a “fake breach.” Had the full-provenance logs been monitored at the time of infection and the exposed credentials reset, this attack would have had nothing to work with.

4. A Practical Provenance Framework

The solution isn’t to throw away all ULP data: it’s to score it properly. Security operations teams can close the validation gap without sacrificing coverage by adopting a tiered trust model.

Only High-Confidence data should trigger automated actions like mass password resets. This simple scoring model resolves the tension between threat visibility and operational stability.
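A tiered model like this can be expressed as a small gating function. The Python sketch below is one possible shape, not Hudson Rock's scoring logic. Only the High-Confidence tier and its link to automated resets come from the text above; the other tier names, the scoring criteria (`from_full_log`, `seen_before`), and the action labels are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    HIGH = "high"      # backed by a full infostealer log
    MEDIUM = "medium"  # bare ULP line, not seen in earlier dumps (assumed tier)
    LOW = "low"        # bare ULP line, already seen before (assumed tier)


@dataclass
class CredentialAlert:
    email: str
    from_full_log: bool  # arrived with full-log provenance
    seen_before: bool    # already present in previously ingested data


def score(alert: CredentialAlert) -> Tier:
    if alert.from_full_log:
        return Tier.HIGH
    return Tier.LOW if alert.seen_before else Tier.MEDIUM


def action_for(tier: Tier) -> str:
    # Only the top tier is allowed to trigger an automated reset.
    return {
        Tier.HIGH: "auto_reset",
        Tier.MEDIUM: "analyst_review",
        Tier.LOW: "log_only",
    }[tier]


recycled = CredentialAlert("alice@example.com", from_full_log=False, seen_before=True)
verified = CredentialAlert("bob@example.com", from_full_log=True, seen_before=False)
print(action_for(score(recycled)), action_for(score(verified)))
```

The design choice is that lower tiers are still recorded and reviewable, so coverage is preserved while a flood of recycled lines cannot reach the reset action.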

5. The Solution: Transitioning to Full Provenance Logs

The only way to stop this loop of noise, false positives, and weaponized ULP dumps is to mandate Ground Truth data. At Hudson Rock, we operate entirely outside the vulnerable ULP pyramid because we demand provenance scoring and use Full Infostealer Logs for any high-impact action.

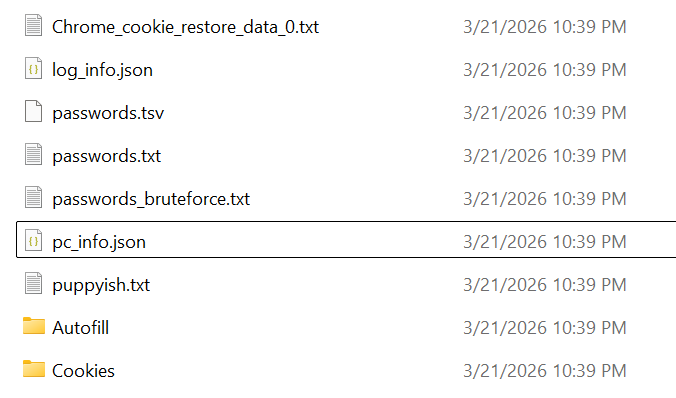

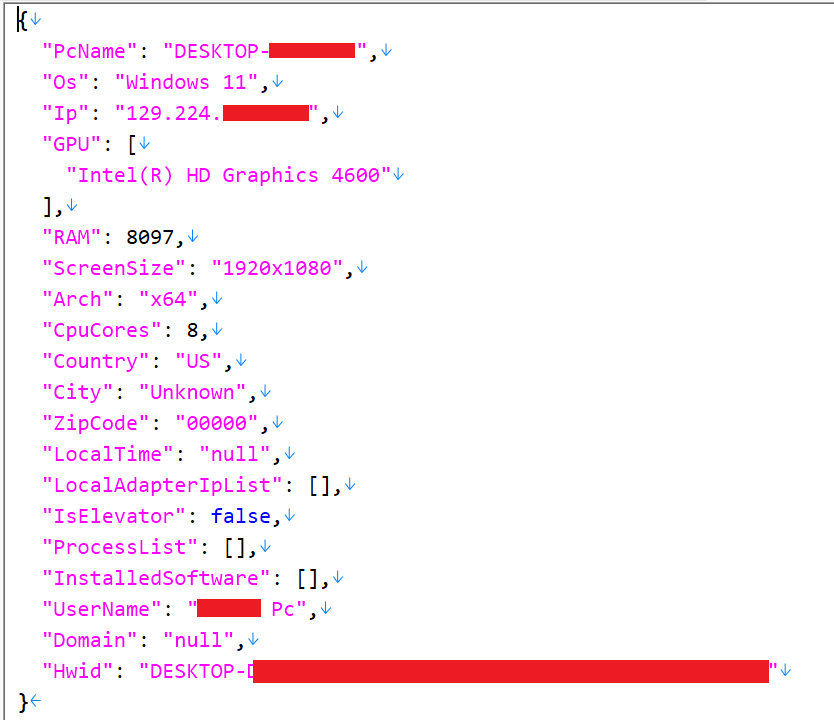

A Full Log is not a text string. It is a comprehensive, cryptographic archive extracted directly from Command and Control (C2) servers. It provides the exact context required to prove a physical device was compromised.

Why raw ULP spoofing fails against Full-Log Provenance

The systemic risk occurs when “Lazy Aggregators” receive high-fidelity logs but strip the metadata to save on database storage. They throw away the system.txt, the IP history, and the hardware IDs, passing only the bare ULP string downstream. They take high-fidelity intelligence, turn it into high-volume noise, and pass a massive vulnerability directly to their own customers.
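The effect of that stripping step can be shown with a short Python sketch. The field names (`system_info`, `ip_history`, `hardware_id`) and all sample values are hypothetical stand-ins for the system.txt contents, IP history, and hardware IDs mentioned above; real log layouts vary by stealer family.

```python
# Provenance fields a downstream consumer would need to verify an infection.
# Names are assumptions for illustration, not a real log schema.
PROVENANCE_FIELDS = ("system_info", "ip_history", "hardware_id")


def strip_to_ulp(full_log: dict) -> dict:
    """What a storage-saving aggregator forwards: the three ULP fields only."""
    return {key: full_log[key] for key in ("url", "login", "password")}


def missing_provenance(record: dict) -> list[str]:
    """List the provenance fields that are absent or empty in a record."""
    return [field for field in PROVENANCE_FIELDS if not record.get(field)]


# Made-up example record; 203.0.113.7 is a documentation-only IP address.
full_log = {
    "url": "https://login.example.com/signin",
    "login": "alice@example.com",
    "password": "Summer2020!",
    "system_info": "hostname=DESKTOP-EXAMPLE; os=Windows 10",
    "ip_history": ["203.0.113.7"],
    "hardware_id": "HWID-EXAMPLE-0001",
}

print(missing_provenance(full_log))                # nothing missing
print(missing_provenance(strip_to_ulp(full_log)))  # every provenance field gone
```

Once the record has passed through `strip_to_ulp`, a downstream consumer cannot tell it apart from a line an attacker invented, because the evidence that would distinguish the two has been discarded.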

6. The Downstream Fallout: Operational Fatigue

If a large intelligence vendor is successfully poisoned with a convincing, accurate-looking ULP file, the enterprise helpdesk becomes the target. It can take days to manually verify and unlock thousands of users.

The true blast radius of unverified automation

When a poisoned ULP list triggers an automated SOAR playbook, the result is an instantaneous ticket storm. A significant portion of the workforce, spanning HR, Finance, Sales, and Engineering, is flooded with false-positive alerts or locked out of Single Sign-On (SSO) environments and corporate email. This is not a theoretical inconvenience; it is a self-inflicted operational failure that disrupts business continuity.

Crucially, because this vulnerability is built into the architecture of the intelligence supply chain, the blast radius is not limited to one company. This systemic risk means that the exact same waves of false positives can strike hundreds of different businesses concurrently, simply because they rely on the same compromised Layer 2 aggregator or MSSP credential monitoring feed.

The enterprise IT helpdesk is overwhelmed and may need days to sort valid alerts from the noise. Consequently, Layer 2 and Layer 3 vendors face severe SLA penalties and an erosion of trust during contract renewals. Rather than risking further operational downtime, CISOs are increasingly forced to suspend automated playbooks entirely. Because the poisoned data can be generated algorithmically, an adversary can trigger this operational fatigue again and again, to the benefit of real attackers.

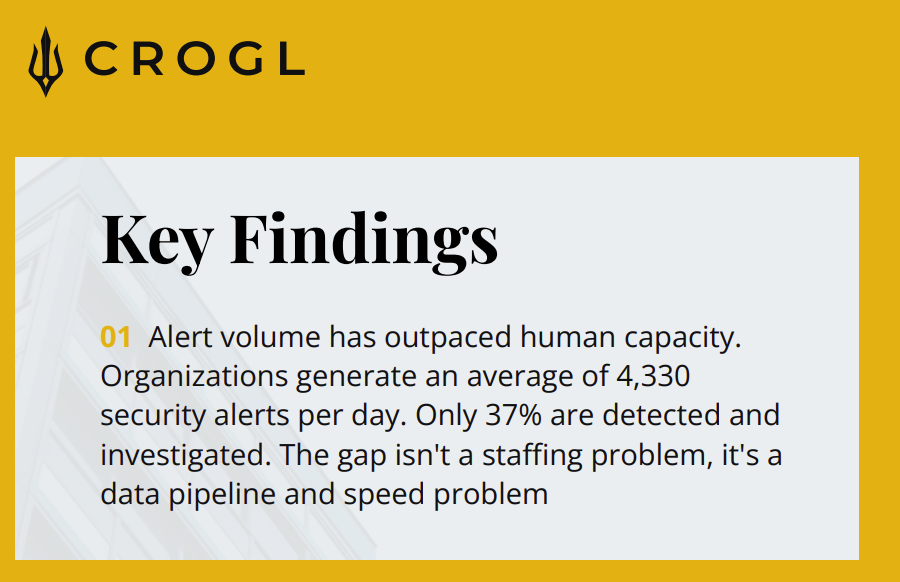

The Mathematical Reality of Alert Fatigue

The operational cost of this noise is staggering. Recent insights shared by cybersecurity firm Crogl reveal the mathematical reality of alert fatigue: modern SOC teams are bombarded with an average of 4,330 alerts per day, with only 37% of them ever being investigated.
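Those two figures translate into absolute numbers with simple arithmetic, sketched below in Python. The first two inputs are the figures cited above. The extra 1,000 unverified ULP alerts per day, and the assumption that analyst capacity stays fixed, are hypothetical and only illustrate the direction of the effect.

```python
alerts_per_day = 4330       # figure cited above
investigated_share = 0.37   # figure cited above

investigated = round(alerts_per_day * investigated_share)
uninvestigated = alerts_per_day - investigated
print(investigated, uninvestigated)  # 1602 2728

# Hypothetical: a feed adds 1,000 unverified ULP alerts per day while
# analyst capacity (alerts investigated per day) stays the same.
extra_ulp_alerts = 1000
new_share = investigated / (alerts_per_day + extra_ulp_alerts)
print(f"{new_share:.1%}")  # 30.1%
```

Under these assumptions, roughly 2,700 alerts already go unexamined every day, and each additional batch of unverified lines pushes the investigated share lower still.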

When volume-obsessed vendors flood this already broken pipeline with thousands of unverified, recycled ULP strings, it doesn’t improve security. It simply guarantees that mathematically verified, high-severity threats are buried under an avalanche of false positives, giving real adversaries the exact breathing room they need to execute an attack.

Conclusion: Security Vendors Must Evolve

This is a call to action for the entire intelligence supply chain. The combination of massive polluted datasets and unverified automation has created preventable operational pain.

Security vendors should evolve from volume-based to provenance-based intelligence. By clearly scoring data quality and reserving aggressive automation for mathematically verified infections, the industry can eliminate this dangerous gap and finally make credential monitoring trustworthy again.

To learn more about how Hudson Rock protects companies from imminent intrusions using Ground Truth provenance, and how we enrich existing cybersecurity solutions with our cryptographically verified cybercrime intelligence API (bypassing the ULP trap entirely), please schedule a call with us here.

Thanks for reading, Rock Hudson Rock!